Accelerating and refining UX testing with agentic AI and multi-agent systems

How an experiment with Synthetic Personas shows that AI's value lies not in replacing entire processes, but in solving specific bottlenecks.

Here at Taqtile, we approach onboarding not just as an integration process, but as an invitation to hands-on experimentation with new technologies. One result of this approach, Ysabella Andrade's experiment using Synthetic Personas for UX testing, reinforced one of the most important lessons of working with the technology:

AI solutions deliver results when they start from a specific bottleneck and grow with each result achieved, not when they try to automate entire processes.

In Ysabella's approach, rather than trying to replace the full UX research cycle, she targeted a critical stage: the intermediate refinement between the initial prototype and the first usability test. As a result, the product gains maturity before going to a test with real people, bringing speed and value to the process — since the real-user test can then focus on more strategic questions.

In the article below, the process is detailed, covering the engineering of the agents used and the learnings about the limits and potential of this approach for designers and PMs.

And if you want to learn more about AI adoption in large enterprises, check out the Taqtile Radar, a report with the key learnings and market data on technology adoption in 2025.

Synthetic Personas: Practical Learnings for UX Testing

By Ysabella Andrade.

Concept/Prompt: Ysabella Andrade.

What if you could test your product with dozens of "users" before recruiting a single real participant?

It sounds counterintuitive, but that is exactly what synthetic personas powered by AI make possible.

During my onboarding at Taqtile, I was given a challenge: understand how synthetic personas could streamline the final refinement of a design or product before testing with real users.

Our goal was to use the onboarding space — a time for understanding processes and building skills — to test whether AI could anticipate hypotheses and identify usability flaws and business rule gaps before taking the product to real validation.

Below, I detail the process and the key learnings:

1. The Scenario: the B2B logistics journey

For a prototype to make sense, it needs to solve a real problem using real data. The product tested in our analysis was a B2B e-commerce platform for consumer goods distribution. This business aims to fully digitize the purchasing process through its official channels, primarily the mobile app.

In this product, the "hard user" is the company representative who serves other businesses. We were not dealing with a standard shopping cart, but a complex journey that needed to accommodate a wide variety of logistics scenarios.

The challenge, then, was to simplify this dense process without reducing the informational layer, while maintaining the confidence of both buyers and sellers. With a tight timeline, we saw an opportunity to test synthetic personas to mature the prototype and understand the possibilities and limits of AI in UX research: will it replace real people? Does it generate valuable insights with reliable evidence? And how should a good test be structured in this format?

2. The process: prompt engineering and terminal security

To ensure technical precision and data security, we did not use common interfaces (such as ChatGPT web, Gemini web, etc.). We operated via Gemini CLI with an API key, directly in the terminal.

This choice gave us greater control over variables and ensured that sensitive business data was processed in a controlled environment, preventing it from being used as training data by the model.

We structured our setup around two complementary autonomous AI agents, creating a data analysis "pipeline":

The Researcher Agent: its mission was to synthesize — it consumed raw interview transcripts, Looker metrics, and business journeys to generate actionable personas. No invented data: if the information was not in the original material, the agent reported the gap.

The Senior UX Agent: this one acted as an interview simulator. It received the personas created by the first agent and ran a Roleplay. Through it, we could question the prototype from different perspectives, switching between response modes (Roleplay, Critique, or Mixed).

The final artifact: from the two agents above, our synthetic persona was born — created by Agent 1 and used by Agent 2. With this artifact, we could anticipate what a real person would potentially say about our questions and screens.

Below is a portion of the "researcher" agent's prompt, with the command description, result format, and response limitations (written in Portuguese).

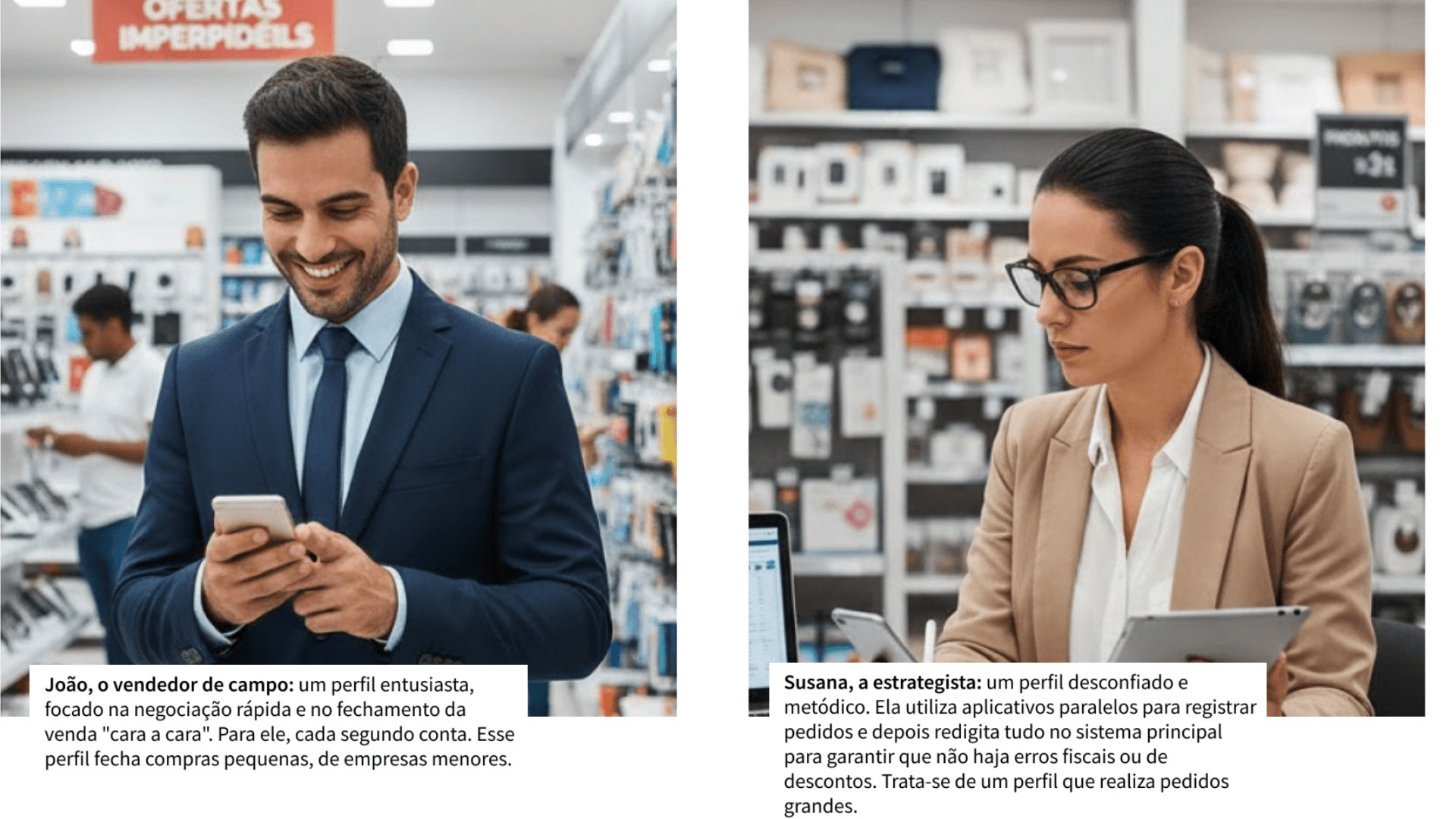
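As an illustration only, the two-agent pipeline described in this section can be sketched in Python. This is a minimal sketch under stated assumptions, not the prompts or tooling used in the experiment: `ModelFn`, `Persona`, `Mode`, the `GAPS:` reply convention, and every function name are hypothetical, and the model call is injected as a plain function so the sketch does not depend on any particular CLI or SDK.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable

# Hypothetical type: any function that sends a prompt to a model and returns text.
ModelFn = Callable[[str], str]


class Mode(Enum):
    ROLEPLAY = "roleplay"  # answer in the persona's voice
    CRITIQUE = "critique"  # answer as a senior UX reviewer
    MIXED = "mixed"        # persona answer followed by a UX critique


@dataclass
class Persona:
    name: str
    profile: str                                   # text produced by the researcher agent
    gaps: list[str] = field(default_factory=list)  # topics the sources did not cover


def researcher_prompt(sources: dict[str, str]) -> str:
    """Build the researcher agent's prompt from named source documents."""
    material = "\n\n".join(f"[{name}]\n{text}" for name, text in sources.items())
    return (
        "Synthesize a persona using ONLY the material below. "
        "If something is not in the material, list it under GAPS: "
        "instead of inventing it.\n\n" + material
    )


def parse_persona(name: str, reply: str) -> Persona:
    """Split the model reply into profile text and reported gaps (assumed format)."""
    profile, _, gap_block = reply.partition("GAPS:")
    gaps = [line.strip("- ").strip() for line in gap_block.splitlines() if line.strip()]
    return Persona(name=name, profile=profile.strip(), gaps=gaps)


def senior_ux_prompt(persona: Persona, question: str, mode: Mode) -> str:
    """Build the Senior UX agent's prompt for one question in one response mode."""
    return (
        f"Mode: {mode.value}\n"
        f"Persona: {persona.name}\n{persona.profile}\n"
        f"Known gaps: {', '.join(persona.gaps) or 'none'}\n\n"
        f"Question: {question}"
    )


def run_pipeline(
    sources: dict[str, str], name: str, question: str, mode: Mode, model: ModelFn
) -> str:
    """Agent 1 creates the persona; Agent 2 uses it to answer the question."""
    persona = parse_persona(name, model(researcher_prompt(sources)))
    return model(senior_ux_prompt(persona, question, mode))
```

Passing the reported gaps on to the second agent is one way to keep the "no invented data" rule visible during the simulated interview.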

2.1. The synthetic personas: João and Susana

To make the test effective, we selected two profiles that embody the different user journeys:

After configuring the researcher agent (to create the personas from the data) and the Senior UX agent (to simulate conversation with them), I ran the roleplay mode command in the terminal and the AI-powered usability test came to life. It was in this synthetic dialogue that we identified the potential synthetic personas have for spotting improvement points in the app flow!

2.2. What did we learn about using synthetic personas?

At the end of the process, despite arriving at important learnings that led us to a far more mature flow proposal, the biggest insights were about how to work with AI in design.

2.2.1. The persona is a reflection of the data used

I learned that a synthetic persona is a "time capsule." If the source material (transcripts, business information, research, etc.) is outdated or incomplete, the persona will struggle to evaluate significant changes based on real data — since that data simply does not exist in those scenarios. In other words, there is a high chance the results will not reflect current user behavior or current business objectives.

Because of this, it is important to emphasize that AI does not replace the need for continuous field research; it only amplifies the analysis of what has already been collected. So, to use synthetic personas effectively, it is valuable to have a continuous discovery habit embedded in your team to make your results more accurate.

2.2.2 Accuracy depends on the prompt structure

The test revealed that AI tends to drift if questions are too generic. To extract value, you need to direct the persona with explicit references.

Example of one of the questions (with directions and references) sent to the simulation (written in Portuguese).

For example, instead of asking "What do you think of the app's checkout?", we asked "Based on your frustration with a lack of fiscal transparency, what is your opinion on this discount grouping in the final step on this page (reference the folder/document where the page is located)?". Structured, conditional questions generate detail-rich responses; loose questions generate confusing ones.
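One way to make that structure hard to skip is to build each question from required parts. The sketch below is only an illustration: `DirectedQuestion` and its fields are hypothetical names, and the reference path in the usage example is a placeholder, not the real location of any screen.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DirectedQuestion:
    trait: str      # persona trait to anchor on, e.g. a known frustration
    element: str    # the specific UI element or decision under test
    location: str   # where in the flow the element appears
    reference: str  # placeholder path to the screen or document

    def __post_init__(self) -> None:
        # Reject loose questions: every part must be filled in.
        for name, value in vars(self).items():
            if not value.strip():
                raise ValueError(f"'{name}' must not be empty")

    def render(self) -> str:
        """Return the conditional, reference-backed question text."""
        return (
            f"Based on your {self.trait}, what is your opinion on "
            f"{self.element} in {self.location}? "
            f"(Reference: {self.reference})"
        )


question = DirectedQuestion(
    trait="frustration with a lack of fiscal transparency",
    element="this discount grouping",
    location="the final step on this page",
    reference="prototype/checkout/final-step",  # hypothetical path
)
print(question.render())
```

Leaving any field blank raises a `ValueError`, which forces the person writing the test script to supply the trait and the reference before the question reaches the persona.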

2.2.3 Roleplay as a way to quickly surface critiques

The biggest advantage was the agility to run synthetic A/B tests. We could rapidly contrast the reactions of two opposing profiles (the enthusiast vs. the cautious one). This allowed us to identify logic flaws and usability improvements in minutes — something that would take days in a traditional recruitment cycle.

Looking ahead to future iterations, automating the entire process with granular prompts would make surfacing improvements even faster and better backed by evidence!

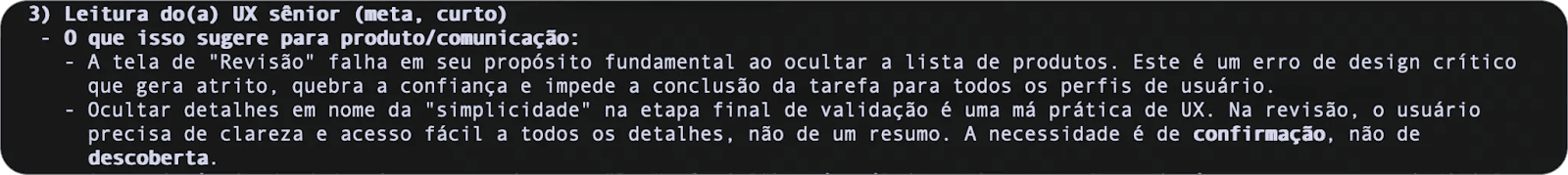
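The contrast between two opposing profiles can be pictured as asking every persona the same question and reading the answers side by side. This is a minimal sketch, assuming the model call is available as a plain function; `ModelFn`, `contrast`, and `side_by_side` are hypothetical names, and the persona profiles would come from the researcher agent rather than being written by hand.

```python
from typing import Callable

# Hypothetical type: any function that sends a prompt to a model and returns text.
ModelFn = Callable[[str], str]


def contrast(personas: dict[str, str], question: str, model: ModelFn) -> dict[str, str]:
    """Ask every persona the same question; return answers keyed by persona name."""
    answers = {}
    for name, profile in personas.items():
        prompt = f"Persona: {name}\n{profile}\n\nQuestion: {question}"
        answers[name] = model(prompt)
    return answers


def side_by_side(answers: dict[str, str]) -> str:
    """Format the answers one per line so differences are easy to scan."""
    return "\n".join(f"{name}: {text}" for name, text in answers.items())
```

Keeping the question identical across personas means any difference in the answers comes from the profiles, not from the wording.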

2.2.4 Synthetic analysis as a support layer

Using the agent in "mixed mode" (roleplay + UX reading) allowed the AI to not only simulate the user, but also make a technical critique of the simulation itself. This helped map data gaps: the AI itself would flag when it did not have enough evidence in the repository to answer confidently, or would directly cite which data source it used to respond.

This opened the door to running tests in a multi-agent structure, like those used in AI agents for business, by splitting test objectives across more than one agent (for example, one to analyze previous recordings to capture moments of doubt and emotion; another to analyze screens and surface design critiques; and so on).

Example of the analysis the "Senior UX" agent produced after a simulation (written in Portuguese).
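Splitting test objectives across agents could start with something as simple as deciding which materials each agent reads. The sketch below is an assumption about how that might look, not a description of a system we built: `AgentSpec`, `assign`, the artifact kinds, and the file names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    name: str
    objective: str
    reads: frozenset[str]  # artifact kinds this agent is allowed to see


def assign(agents: list[AgentSpec], artifacts: dict[str, str]) -> dict[str, list[str]]:
    """Map each agent name to the files whose kind it reads.

    `artifacts` maps a file name to its kind (e.g. "recording", "screen").
    """
    plan: dict[str, list[str]] = {agent.name: [] for agent in agents}
    for filename, kind in sorted(artifacts.items()):
        for agent in agents:
            if kind in agent.reads:
                plan[agent.name].append(filename)
    return plan


agents = [
    AgentSpec("recording-analyst", "capture moments of doubt and emotion", frozenset({"recording"})),
    AgentSpec("screen-critic", "surface design critiques", frozenset({"screen"})),
]
artifacts = {"test-01.mp4": "recording", "checkout.png": "screen", "cart.png": "screen"}
plan = assign(agents, artifacts)
```

Giving each agent a narrow objective and only the materials it needs keeps every critique traceable to a known subset of the data.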

2.2.5 Accelerated learning curve about the business

An unexpected benefit was how synthetic personas helped me learn about the client's business much faster. Many questions I had about the business and its users I was able to clarify by asking the persona directly, since it was fed with all of the product's previous materials.

I later confirmed those points with the team in a synchronous meeting: what the persona had surfaced was consistent with the information the project team held.

I saw in this an opportunity to resolve doubts that previously would have required scheduling meetings with project colleagues and depending on everyone's availability. Now, I can "ask" the persona first — especially in situations where time is a constraint.

Of course, I always keep the AI's limitations in mind: I only ask about topics for which we have data, and I confirm critical points with the team before acting.

3. AI to refine, humans to validate

A fundamental learning from this onboarding was the demystification of AI's role. Synthetic personas are a refinement tool: they allow the designer to arrive at validation with humans with a far more mature prototype, having already "cleaned up" obvious logic and flow errors.

In other words, it does not eliminate the need for validation with real users — it optimizes the product before real tests, so that the focus of validation can be on the points that are truly critical to the business.

Want to explore how multi-agent systems can accelerate your design and product process? Learn how we develop generative AI solutions to solve specific bottlenecks in enterprises.

4. The Boundaries of AI

Although the use of synthetic personas accelerated our design cycle, methodological maturity requires recognizing where the technology still encounters barriers (and what we can or cannot iterate on). During the process, we mapped limitations that define AI's role as a refinement tool — not a replacement:

4.1 Data dependency

A synthetic persona is only as deep as the data used to feed it. If there are gaps in the original materials, or if the data is outdated (which was our case), the AI will not be able to predict accurate reactions. For example, in our simulation, the synthetic user frequently focused on a problem in their journey that had already been resolved, because the real-user tests that fed the persona had been conducted before that improvement. In this scenario, we understood that the persona reflects the past to try to anticipate the future, which is why constant refreshing of real data matters.

4.2 The "surprise" and emotional factor

AI's strength is in logic and pattern detection, but it still falls short in replicating human unpredictability. Complex emotions, such as the hesitation felt when seeing a new button or screen, are things the simulation could not capture. As the Nielsen Norman Group study on the topic notes: human behavior is complex and context-dependent, and synthetic users cannot capture that complexity.

4.3 Qualitative precision vs. quantitative measurement

During the tests, we noticed that the analysis leans more toward the qualitative. While AI excels at explaining why a flow is confusing, a task time measured in a synthetic environment is only the AI's own estimate and has no independent validation. The simulation answers the "what" and the "why", but the "how much" still belongs to real usability testing.

4.4 The hallucination risk

Without a rigorous prompt structure, AI can "drift" or attempt to fill information gaps with generic assumptions about the data. Even a rigorous prompt is not enough on its own without a multi-agent setup. Organizing the output so that each synthetic persona response is followed by the UX agent's analysis was crucial to separate potential evidence from inference, keeping the output "clean" of responses generated without context or reliable data.
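To make the evidence-versus-inference split concrete, here is a minimal sketch that labels each sentence of a response by whether it cites a known data source. It rests on an assumption that is not part of the experiment as described: that the agent is prompted to cite sources inline as `[source: name]`. The `Claim` class, `label_claims` function, and source names are hypothetical.

```python
import re
from dataclasses import dataclass

# Assumed convention: the agent cites its sources inline as [source: name].
CITATION = re.compile(r"\s*\[source:\s*([^\]]+)\]")


@dataclass
class Claim:
    text: str
    sources: list[str]

    @property
    def kind(self) -> str:
        # A claim counts as evidence only if it cites at least one known source.
        return "evidence" if self.sources else "inference"


def label_claims(response: str, known_sources: set[str]) -> list[Claim]:
    """Split a response into sentences and keep only citations to known sources."""
    claims = []
    for sentence in re.split(r"(?<=[.!?])\s+", response.strip()):
        if not sentence:
            continue
        cited = [name.strip() for name in CITATION.findall(sentence)]
        valid = [name for name in cited if name in known_sources]
        claims.append(Claim(text=CITATION.sub("", sentence).strip(), sources=valid))
    return claims


response = (
    "I never know the final tax amount [source: interview-03]. "
    "I would probably call my manager. "
    "Prices change daily [source: made-up]."
)
for claim in label_claims(response, known_sources={"interview-03"}):
    print(claim.kind, "|", claim.text)
```

A citation to a source that is not in the repository is treated the same as no citation, so a made-up reference cannot promote an inference to evidence.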

Ultimately, recognizing these limitations does not diminish the value of the methodology. On the contrary, it gives the designer the clarity needed to know exactly when to trust the simulation, when to iterate the method, and when it is time to go to the field and listen to the real user.

Leading with intention

During my onboarding, I learned that Taqtile has lived alongside technological transformations since its founding. Living through these changes, I came to understand that technology changes — but it is people who lead the advances.

With AI, it is no different! As this experiment showed, synthetic personas can compress weeks of learning into a few days of simulation. However, it is not the technology itself, but how we use it that determines the success or failure of a project… or even an experiment.

Using organized agents for each stage gave us the agility to fail fast, learn, and adjust before investing time in recruitment and real interviews.

Curious to learn more? Here at Taqtile, we live daily with the challenge of implementing Artificial Intelligence in large corporations. Follow our LinkedIn page, where we are always sharing new learnings, techniques, studies, and strategies involving the technology!