We're investing wrong: the real problem with agentic AI adoption in enterprises

The patterns of the successful 5% show that business AI agents that deliver results start small, with a focus on incremental process automation

Concept/Prompt: Danilo Toledo · Image: Midjourney

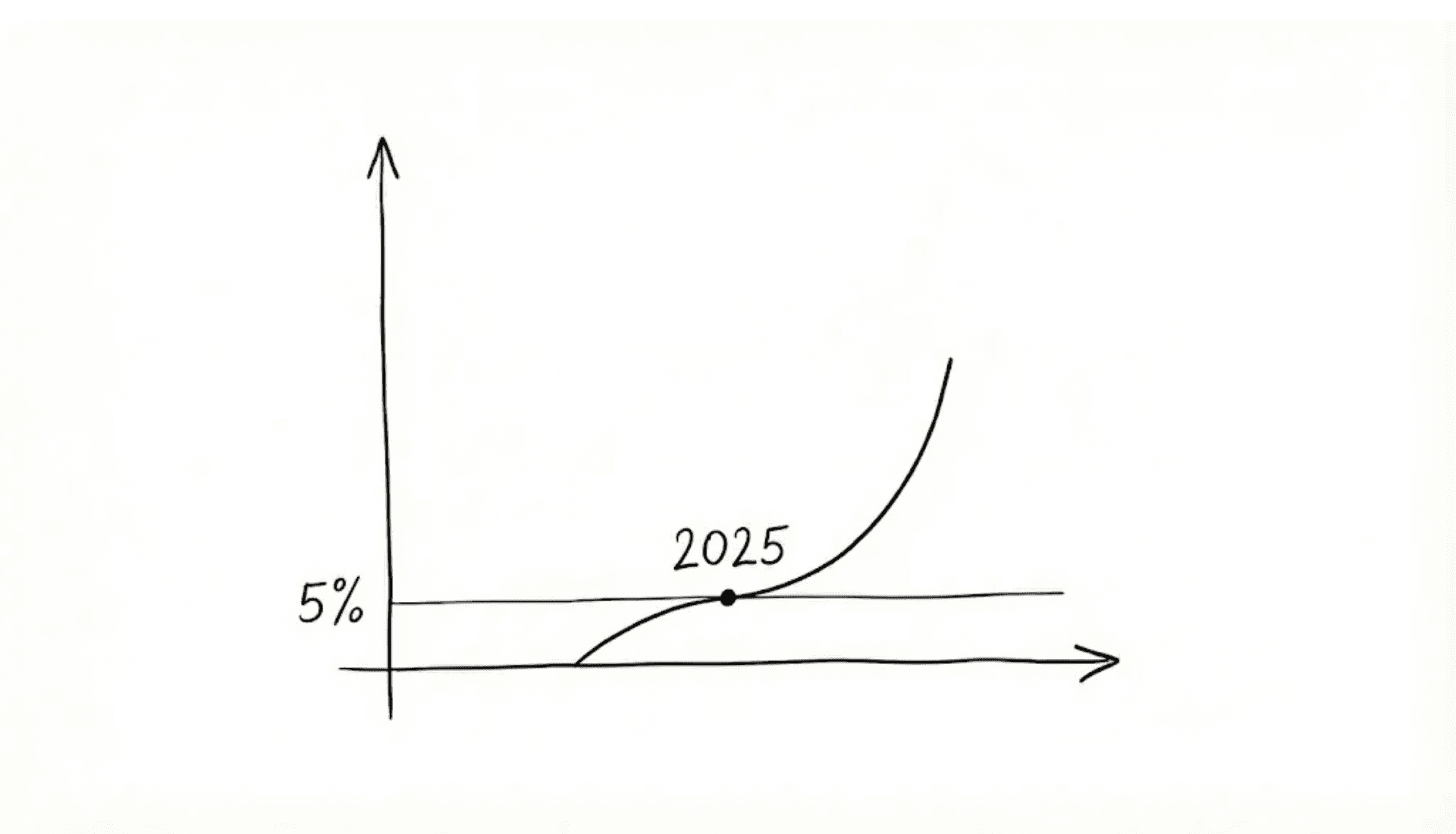

I cannot recall another study making as much noise this year as The GenAI Divide, from MIT's Project NANDA. The AI skeptics wasted no time. They republished the headline everywhere: '95% of GenAI pilots in enterprises delivered zero return in 2025.'

For those of us still in the room — those who understand that agentic AI in enterprises is here to stay — the mission is far more pragmatic: ignore the noise and focus on the signal. What replicable patterns do the 5% of successful cases have in common?

Among the MIT findings, three points resonated most strongly with what we have been seeing at Taqtile. These were hard-learned lessons, perhaps avoidable had we paid closer attention to previous waves of innovation. Either way, 2025 marked a natural inflection point, and it is time to share what we have learned so we can all move forward stronger.

Detailed below, the three key points are:

GenAI MVPs need to be even smaller than we expected

Treat AI agents like new hires. And set promotion criteria

Understand where GenAI excels (and where it struggles)

1. GenAI MVPs need to be even smaller than we expected

We have grown used to building MVPs focused on digital transformation. Start small, validate, expand. But what surprised us is that GenAI demands even smaller starting points than traditional digital applications, and this comes down to two seemingly contradictory reasons:

Because it is a more flexible and adaptable technology, delivering value with Generative AI can happen much faster.

At the same time, because it is less predictable, the potential for failure multiplies just as quickly.

Teams that are generating real value with AI agents are not trying to automate full, complex processes from day one. Those projects tend to drag on — trapped in endless edge-case management.

The projects that actually deliver results — like the 5% in the MIT study — start with something so focused it is almost embarrassing to justify. And that is exactly where the real work begins: educating and aligning expectations. It is harder than it sounds to manage stakeholders who, dazzled by GenAI's potential, expect the technology to solve fundamentally different problems all at once.

Using sales as an example: building an autonomous agent that manages the entire pipeline, from first contact to close, is a challenge that — while possible — will likely trap your team in an endless cycle of exception adjustments. And it will take far longer to generate results than an agent that simply qualifies and warms up leads. Less sexy, sure, but far smarter to start with an AI that identifies who is showing buying signals and hands those prospects — prepared and ready — to your human team. Your salespeople stop wasting hours on leads that will not convert. They focus on closing. The value appears immediately.

The MIT research confirms this pattern: solutions focused on "small but critical workflows" reach US$1.2 million in annualized value within 6 to 12 months. These projects typically take 90 days from pilot to full implementation. Projects that target broad, complex processes? Nine months or more, often with nothing to show for it.

"Start smaller than feels comfortable. Prove value. Expand from results."

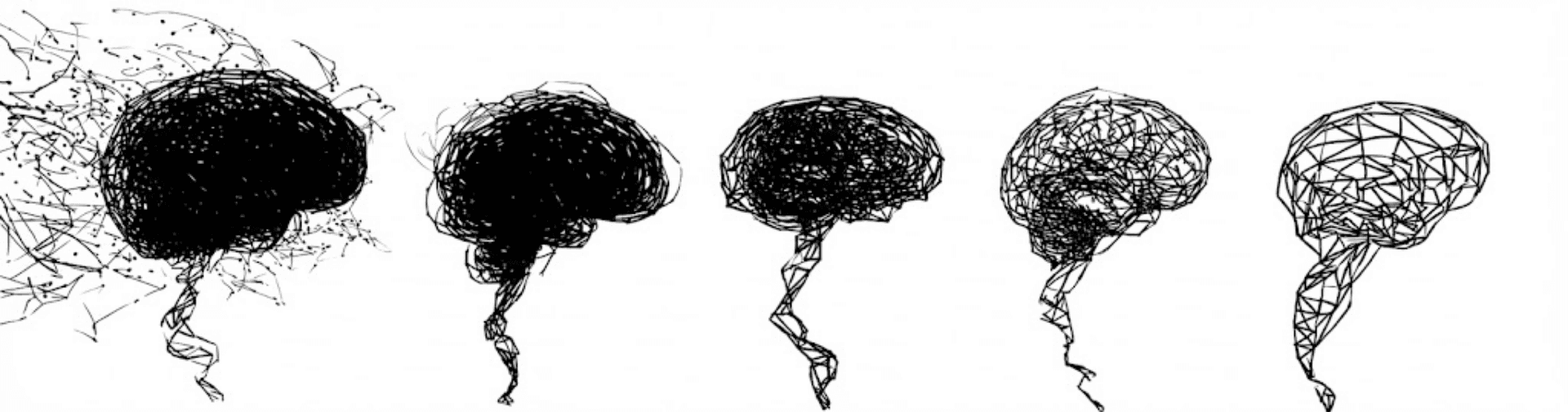

AI Agents Guided Autonomy. Concept/Prompt: Danilo Toledo · Image: Midjourney + Gemini

2. Treat AI agents like new hires. And set promotion criteria

"Your best collaborators did not arrive on day one with full autonomy. They earned it."

The same principle applies to AI agents. Yet most companies grant too much autonomy too fast, and watch the agents fail. The opposite is equally risky: overly restricted agents, waiting for perfection, may never deliver value. That is a stagnation problem.

An approach proposed by researchers at the University of Washington — Levels of Autonomy for AI Agents (Feng, McDonald & Zhang, 2025) — has been decisive in Taqtile's projects. It is a framework of increasing autonomy levels that describes five roles a user can take when working side by side with an autonomous AI agent: operator, collaborator, consultant, approver, and observer. The key point: the agent does not skip steps. It is promoted as it delivers results.

Levels of Autonomy for AI Agents. Feng et al., preprint, 2025

Continuing with the sales workflow example: in practice, this means starting with agents that shadow your salespeople. These AI co-pilots work side by side with your team, drafting messages for human review, suggesting next actions, and learning from corrections. They are in training.

As they prove their value — as time saved increases, message edit rates drop, and team confidence grows — you promote them. They move on to handling real customer interactions, initially with oversight, then with growing independence.

The secret lies in clear operational metrics from day zero. Not subjective metrics about model accuracy — business metrics.

Time saved per salesperson.

Edit rate of AI-generated messages.

Conversion rate of AI-qualified leads.

These metrics indicate when an agent is ready to take on more responsibility.
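
As an illustration, the promotion logic described above can be sketched in a few lines of Python. This is a minimal sketch, not how Taqtile or the University of Washington paper implements it: the five role names come from the framework, but `AgentMetrics`, `PromotionCriteria`, `next_role`, and every threshold value are hypothetical names and numbers chosen only for the example.

```python
from dataclasses import dataclass
from enum import IntEnum


class UserRole(IntEnum):
    """Role the human plays at each level; agent autonomy grows from 1 to 5."""
    OPERATOR = 1
    COLLABORATOR = 2
    CONSULTANT = 3
    APPROVER = 4
    OBSERVER = 5


@dataclass(frozen=True)
class AgentMetrics:
    """Business metrics observed for the agent (not model accuracy)."""
    hours_saved_per_rep_per_week: float
    edit_rate: float                  # share of AI drafts edited by humans, 0.0-1.0
    qualified_lead_conversion: float  # conversion rate of AI-qualified leads, 0.0-1.0


@dataclass(frozen=True)
class PromotionCriteria:
    """Thresholds an agent must meet to leave its current level."""
    min_hours_saved: float
    max_edit_rate: float
    min_conversion: float

    def met_by(self, m: AgentMetrics) -> bool:
        return (
            m.hours_saved_per_rep_per_week >= self.min_hours_saved
            and m.edit_rate <= self.max_edit_rate
            and m.qualified_lead_conversion >= self.min_conversion
        )


# Hypothetical thresholds, for illustration only; real values depend on the business.
CRITERIA = {
    UserRole.OPERATOR: PromotionCriteria(1.0, 0.50, 0.05),
    UserRole.COLLABORATOR: PromotionCriteria(2.0, 0.30, 0.08),
    UserRole.CONSULTANT: PromotionCriteria(4.0, 0.15, 0.10),
    UserRole.APPROVER: PromotionCriteria(6.0, 0.05, 0.12),
}


def next_role(current: UserRole, metrics: AgentMetrics) -> UserRole:
    """Promote by at most one level, and only if the current level's criteria are met."""
    if current is UserRole.OBSERVER:
        return current  # already at the highest autonomy level
    if CRITERIA[current].met_by(metrics):
        return UserRole(current + 1)
    return current
```

With these made-up thresholds, `next_role(UserRole.OPERATOR, AgentMetrics(2.0, 0.40, 0.06))` promotes the agent one level, to `UserRole.COLLABORATOR`. Even outstanding metrics move it only one step at a time, which mirrors the rule that the agent does not skip steps.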

Agents that are born focused earn team trust faster. There are fewer edge cases to adjust, fewer failures that erode confidence. More than half of the leaders interviewed by MIT pointed to tools that "break on edge cases and fail to adapt" as the main reason their pilots never made it to production.

Want to see this approach in action? See how Taqtile used multi-agent systems to accelerate UX testing and mature prototypes before real tests.

3. Understand where GenAI excels (and where it struggles)

The first wave of GenAI applications created an illusion: that everything could be solved with prompt engineering and retrieval-augmented generation tricks (a.k.a. RAG). Reality showed there is no silver bullet: purely generative systems attacking complex workflows failed repeatedly.

And here is something we see constantly: every workflow you think is simple reveals surprising complexity once you try to automate it — especially in high-volume contexts, such as customer-facing applications.

What the MIT 5% also seem to understand: successful projects look more like traditional software applications than Generative AI magic.

What we have seen here is that the winning architecture is not pure GenAI. It is hybrid — deterministic flows to ensure reliability combined with generative AI where it genuinely excels. GenAI is remarkable at understanding and generating natural language, handling ambiguity, and adapting to context. But business logic? Predictable rules? Edge-case handling? Traditional engineering still wins.

In our projects at Taqtile, the best results come from this combination. Specialized agents with controlled autonomy manage context better, respect business rules with greater precision, and scale with far more reliability than systems that try to solve everything with GenAI alone.

The recipe for production success is simple, but vital: build a solid foundation of deterministic flows and use generative AI in a targeted way, only where it performs well.
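
To make the hybrid idea concrete, here is a minimal Python sketch based on the lead-qualification example. It is an assumption-laden illustration, not a description of any production system: `Lead`, `is_qualified`, `handle_lead`, the qualification rule, and `fake_model` (a stand-in for a real language-model call) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Lead:
    name: str
    company_size: int
    visited_pricing_page: bool
    last_message: str


@dataclass(frozen=True)
class Decision:
    action: str             # "nurture" or "hand_off_to_sales"
    message: Optional[str]  # generated draft, only for qualified leads


def is_qualified(lead: Lead) -> bool:
    """Deterministic business rule (hypothetical): testable without any model."""
    return lead.company_size >= 50 and lead.visited_pricing_page


def handle_lead(lead: Lead, draft_message: Callable[[str], str]) -> Decision:
    """The deterministic flow decides what happens; the generative step only writes text."""
    if not is_qualified(lead):
        return Decision(action="nurture", message=None)
    prompt = (
        f"Write a short, friendly follow-up to {lead.name}. "
        f"Their last message was: {lead.last_message}"
    )
    return Decision(action="hand_off_to_sales", message=draft_message(prompt))


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call so the sketch runs offline."""
    return f"[draft for prompt: {prompt}]"


if __name__ == "__main__":
    lead = Lead("Ana", company_size=120, visited_pricing_page=True,
                last_message="Can you share pricing for 100 seats?")
    print(handle_lead(lead, fake_model))
```

The design choice is that the model never decides who gets handed to sales. That rule lives in plain code that can be unit-tested, while the generative call is confined to drafting language, which is where it performs well.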

From noise to signal: what it takes

The journey from cycles of strategic paralysis and wasted investment toward profitable Generative AI integration demands adopting a pragmatic engineering mindset:

Extreme Focus: start ultra-small and attack critical workflows, proving immediate value in 90 days, rather than chasing full automation.

Governance: grant guided autonomy, treating AI agents like new hires who must earn trust and responsibility based on clear business metrics — not subjective model accuracy.

Architecture: prioritize the hybrid model, using deterministic flows to ensure reliability and reserving GenAI only for where it genuinely excels (language and ambiguity).

This is the real difference between noise and signal, and this is the inflection point of 2025. It is time to leave behind the "silver bullet" expectation and build incrementally, with discipline.

AI Agents in enterprises: 2025 as an inflection point. Concept/Prompt: Danilo Toledo · Image: Gemini

The risk of not carrying these lessons into 2026

The risk of not putting these lessons into practice now is tangible, and the MIT research points to a worrying pattern.

"In the coming quarters, many companies will find themselves locked into commercial contracts with solutions that, while impressive, will not solve their real problems."

The scenario is predictable. We have seen this movie before. Salespeople from major technology platforms, under pressure to show returns on the investments made to force AI into their products, will arrive sharp and armed with impressive demos that do not integrate with your real workflows.

And the companies that do not replicate the patterns of the MIT 5% will discover — too late — that these systems fail in most of their specific business scenarios. They will lose money, time, and the peace of mind of their decision-makers.

The MIT-validated shortcut: the right approach

At the same time, the research identifies an interesting shortcut for acceleration: approach, not technology.

The organizations that stand out partner with enterprise AI consulting specialists who help them understand their own workflows first, and only then adapt the AI implementation to fit those existing flows, not the other way around.

The data backs this up: projects executed with external partners are 2x more likely to succeed and reach production 3x faster.

Why do external partners accelerate results? We are biased, admittedly, but we see at least three decisive reasons:

Genuine Multidisciplinarity: teams combining engineering, design, strategy, and research from Day 1 — not siloed departments meeting in status calls.

Cross-Industry Pattern Recognition: partners who have worked across dozens of implementations know which approaches work in which contexts. They have already made the mistakes internal teams are about to make.

Continuous Updates: dedicated teams researching GenAI, tracking models, frameworks, and best practices daily — without competing with internal priorities.

The answer we found at Taqtile: AI Sprint

The MIT research indicates it is not the technology that separates success from failure. The combination of profiles, methodologies, and capabilities needed to develop successful GenAI initiatives tells us this is not merely a matter of tool selection — it is a matter of approach.

The answer we found at Taqtile lies in rethinking widely validated methodologies. We adapted the Design Sprint, a proven innovation framework from the digital transformation era, to the challenges of GenAI. The result is our AI Sprint. Because it is ultra-focused, hybrid, and built on controlled autonomy, this approach is designed so that AI agents deliver value from Day 1.

Ultimately, GenAI is a journey of continuous learning, and the knowledge of those building it is our most valuable asset. If you are one of those still in the room, navigating the challenges of GenAI implementation in your organization, we would love to continue this conversation.

If you want to learn more about how we apply these principles in practice, discover our AI Sprint framework.